The Difference Between Knowing the Answer and Understanding the Problem

There is a question I was asked once in a technical debrief that I have never forgotten. We had just resolved a network outage — found the fault, fixed the problem, written it up. The client was satisfied. The job was done.

The engineer running the debrief looked at me and asked: "What was the actual problem?"

I gave him the answer. The physical fault. The component that had failed.

He shook his head. "That's what broke. What was the problem?"

It took me longer than I would like to admit to understand what he meant.

There is a distinction that sits at the heart of good technical reasoning, and it is one that most formal education manages to avoid almost entirely.

Knowing the answer is not the same as understanding the problem.

That sounds like a philosophical fine point. It isn't. It is one of the most practically consequential differences a person can learn to navigate, and your training, right now, is building the capacity to navigate it. Many people never get that practice.

In most educational settings, the problem is given to you. It is clean, bounded, and pre-confirmed to have a solution. Your job is to find the answer the marking rubric already contains. This produces people who are very good at answering questions — and often genuinely helpless when the question itself hasn't been correctly formed yet.

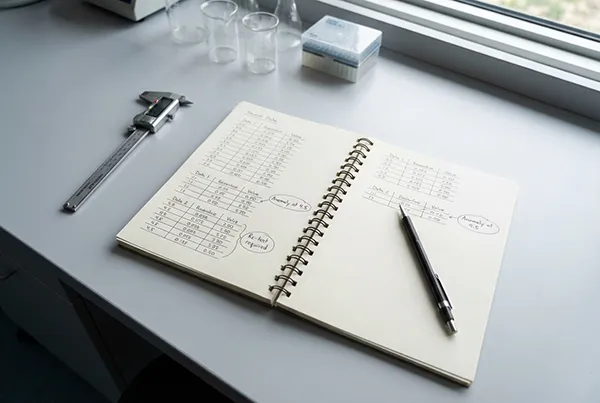

STEM training doesn't entirely escape this, but it leans against it, and the lab leans against it harder. In a real laboratory environment, you are regularly confronted with results that don't match what you expected, and your first job is not to correct the result but to understand what the gap between expectation and result is telling you.

Was the methodology flawed? Was the sample compromised? Was the assumption wrong? Is this a meaningful result or measurement noise? Is the question you started with actually the right question?

These are not things a marking rubric gives you. You have to develop the instinct for them yourself.

I spent years watching highly credentialled people fail at this in expensive ways.

A project manager who knew exactly how to build a plan — but who had never stopped to check whether the plan was solving the right problem. An analyst who could produce a report of extraordinary technical quality — built on a question the client had asked badly and no one had thought to interrogate. A vendor who gave us a technically correct answer to a question we hadn't actually asked.

In each case, the knowledge was real. The answer was accurate. The problem was wrong.

That particular failure mode, arriving at the right answer to the wrong problem, is extraordinarily common and usually goes undetected, because a correct answer looks like competence even when it addresses the wrong question.

STEM training catches this earlier than most. Not because STEM educators are necessarily better teachers, but because the methodology itself exposes it. When your result doesn't match your hypothesis, the first thing you are trained to ask is: was my hypothesis the right one? When your experiment fails to isolate a variable, the question isn't just "what went wrong" — it is "what was I actually testing?"

That reflex — the habit of interrogating the question before you commit to the answer — is one of the most valuable things a person can build. And most people never build it, because the educational systems they moved through never required it.

You are building it right now. Probably without noticing.

Every time you encounter an unexpected result and have to trace back through your methodology to find out what it means, you are practising this. Every time you have to decide whether a variable is significant or noise, whether a procedure was followed correctly or simply followed, whether the thing you measured was the thing you meant to measure — that is the skill being installed.

It does not feel like a profound life skill in the moment. It feels like an inconvenient result that needs to be explained before you can go home.

But the professional who can look at a problem, pause before assuming they know what the question is, and ask "wait — is this actually what we're trying to solve?" is operating at a different level to almost everyone around them. In any industry. At any level.

The answer is often the easy part. Most people with enough technical knowledge can find the answer to a well-formed question.

The harder thing — the rarer thing — is recognising when the question hasn't been well-formed yet. And doing something about it before the wrong answer gets acted on.

Your training is showing you how. The question is whether you're watching closely enough to see it happening.